The $1 Trillion Misdirection: What NVIDIA Actually Announced at GTC 2026

Everyone's debating Jensen's trillion-dollar forecast. They're missing the real story: NVIDIA just became an operating system company. Our deep analysis of GTC 2026 — what it means for developers, startups, and everyone NVIDIA is about to squeeze.

Jensen Huang stood on the SAP Center stage for two hours and eighteen minutes last Sunday. He unveiled seven new chips, previewed a next-generation architecture, sent AI compute to literal outer space, and brought a walking, talking Olaf from Frozen onto the stage. The crowd — CNBC called it the "Woodstock of AI" — ate it up.

And then he casually dropped: $1 trillion in cumulative revenue from 2025 through 2027.

That's the number everyone latched onto. Financial media ran headlines. Analysts debated it. NVDA ticked up in post-market trading. Twitter went predictably feral.

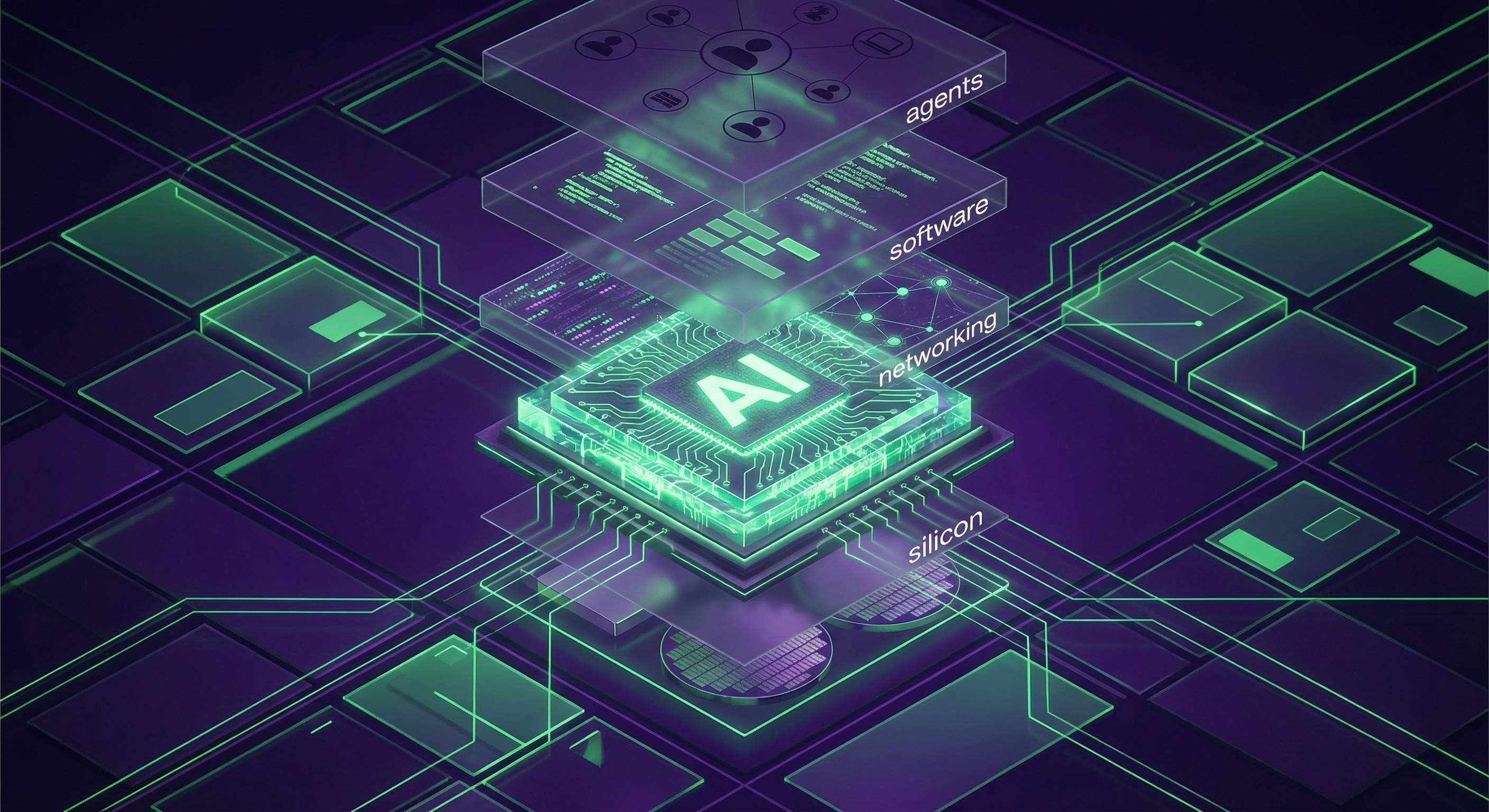

But the trillion-dollar number is the misdirection. It's the shiny object Jensen wanted you to focus on while he quietly announced something far more consequential: NVIDIA is no longer a chip company. As of GTC 2026, NVIDIA is building the operating system for the AI era — and they're doing it by vertically integrating every layer of the stack, from silicon to software to the agents that run on top.

Here's what actually happened, why it matters, and who's about to get squeezed.

The Headlines (Speed Round)

If you just want the bullet points, here's the 60-second version. But stay for the analysis — that's where it gets interesting.

Vera Rubin Platform — Seven new chips in full production: Vera CPU (88 custom Olympus cores), Rubin GPU, Groq 3 LPU, NVLink 6, ConnectX-9, BlueField-4, Spectrum-6. Five rack-scale systems. One supercomputer. Available H2 2026. Trains MoE models with 1/4 the GPUs vs. Blackwell. 10x higher inference throughput per watt. (NVIDIA Newsroom)

NemoClaw + OpenClaw — NVIDIA's enterprise agent platform. Single-command install of Nemotron models with OpenShell security runtime. Jensen's exact words: "OpenClaw is the operating system for personal AI." Partners include Adobe, Atlassian, Salesforce, SAP, Palantir, and basically everyone. (NVIDIA Press Release)

DLSS 5 — Neural rendering that understands scene semantics. Jensen called it "the GPT moment for graphics." Real-time at 4K. Starfield, Assassin's Creed Shadows, and 10+ titles at launch. Fall 2026. (NVIDIA Press Release)

Uber Robotaxi Deal — Full-stack NVIDIA DRIVE AV-powered robotaxis on Uber. 28 cities across 4 continents by 2028, starting LA and SF Bay Area in H1 2027. (NVIDIA Newsroom)

Space Computing — Yes, literally. AI data centers in orbit. Space-1 Vera Rubin Module with 25x compute vs. H100. Partners include Planet Labs, Axiom Space, and Starcloud. (NVIDIA Press Release)

Feynman Architecture Preview — Already teasing the next generation: Rosa CPU, LP40 LPU, BlueField-5, Kyber interconnect. Because Jensen always sells two roadmaps ahead.

📺 Watch: CNET's 12-Minute GTC Summary

Our Analysis: The Platform Play Nobody's Talking About

Thesis: NVIDIA Is Becoming the AI Operating System

Here's the shift that matters more than any chip spec: NVIDIA is moving from selling infrastructure to owning the platform layer.

Think about what Jensen actually announced:

- Hardware layer: Vera Rubin (the silicon)

- Runtime layer: OpenShell (the security/policy engine for agents)

- Model layer: Nemotron 3 family (the default AI models)

- Platform layer: NemoClaw + OpenClaw (the "OS" for AI agents)

- Application layer: AI-Q Blueprint, Agent Toolkit (the dev tools)

That's not a chip announcement. That's an operating system stack. Every layer designed to work together, optimized end-to-end, with third-party partners plugging into NVIDIA's ecosystem — not building their own.

Jensen wasn't subtle about this. He literally said:

"Mac and Windows are the operating systems for the personal computer. OpenClaw is the operating system for personal AI."

The enterprise partner list for the Agent Toolkit reads like a Fortune 500 directory: Adobe, Atlassian, Box, Cadence, Cisco, CrowdStrike, Palantir, Red Hat, SAP, Salesforce, ServiceNow, Siemens. These aren't pilot programs. These are integrations. When Salesforce builds on NVIDIA's agent toolkit, that's a dependency that doesn't unwind easily.

Source: @PatrickMoorhead


Patrick Moorhead of Moor Insights & Strategy put it well on X: "$NVDA just dropped the biggest forward demand signal..." He was calling out the leap from $500B to $1T in cumulative demand. But even Moorhead focused on the demand number, and the demand is a consequence of the platform lock-in, not the cause.

The Contrarian Take: Forget the Hardware. The Inference Shift Is the Story.

Every tech outlet led with Vera Rubin's specs. Seven chips! 10x inference throughput! 1/4 the GPUs for training!

Those numbers are impressive. They're also... expected. NVIDIA has delivered a generational chip improvement every 18-24 months for a decade. The market has priced this in.

What the market hasn't priced in: the shift from training to inference economics.

Here's what's actually new at GTC 2026:

The Groq 3 LPU (NVIDIA's inference accelerator, not to be confused with Groq Inc.) delivers 35x higher inference throughput per megawatt. A rack holds 256 LPU processors, with 128GB of on-chip SRAM and 640 TB/s of scale-up bandwidth, and is designed to make trillion-parameter model inference economically viable at scale.

The Vera CPU isn't just another server CPU — it's purpose-built for agentic AI workloads. 88 custom Olympus cores with Spatial Multithreading, 1.8 TB/s coherent bandwidth via NVLink-C2C. Jensen's framing was explicit: "The CPU is no longer simply supporting the model; it's driving it."

The BlueField-4 STX Storage Rack — the announcement nobody covered — provides AI-native KV cache optimization that boosts inference throughput by 5x.

See the pattern? Training gets a generational bump (1/4 GPUs for MoE — great). But inference gets a fundamental architectural rethink — custom silicon, purpose-built CPUs, optimized storage, all designed for the agentic AI workload pattern of continuous, low-latency, high-concurrency inference.

Why? Because the economics of AI are shifting. Training a frontier model is a one-time (well, periodic) cost measured in billions. But running that model — serving inference to millions of agents, 24/7, at latencies that feel instant — that's where the ongoing revenue is. NVIDIA knows this. The Groq 3 LPU exists because inference is the recurring revenue stream, and whoever wins inference economics wins the next decade.

Source: @ryanshrout


Ryan Shrout nailed this framing on X: Vera Rubin is "a system architecture evolution rather than a chip that makes Blackwell obsolete." That's exactly right. It's not about the chip. It's about the system — and the system is optimized for inference-first workloads.

📺 Watch: Sam Witteveen's NemoClaw Deep-Dive

Developer Impact: NemoClaw Changes What You Build

Let's get practical. If you're a developer building AI-powered products, GTC 2026 had three announcements that directly affect your roadmap.

1. NemoClaw Makes Enterprise Agents Deployable (Finally)

The biggest friction in shipping AI agents to enterprise customers has been security, not capability. CISOs don't care that your agent can reason about quarterly reports — they care about data exfiltration, uncontrolled API calls, and agents that hallucinate their way into compliance violations.

NemoClaw addresses this head-on:

- Single-command install of Nemotron models + OpenShell runtime

- Privacy router for hybrid local/cloud inference — sensitive data stays on-prem

- Policy-based guardrails enforced at the runtime level, not the application level

- Security partnerships with Cisco, CrowdStrike, Google, Microsoft Security, TrendAI

The OpenShell runtime is the key piece. It's an open-source security layer that sits between the agent and the world, enforcing network policies, privacy rules, and safety constraints. Think of it as a firewall for AI agents — but one that understands what the agent is trying to do, not just what packets it's sending.
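To make "enforced at the runtime level" concrete, here is a minimal sketch of a policy check that sits between an agent and the outside world. It is not OpenShell's API: `Policy`, `ToolCall`, `decide`, the host names, and the sensitive-data markers are all hypothetical names we made up for illustration.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class Policy:
    allowed_hosts: frozenset[str]        # network egress allowlist (hypothetical)
    sensitive_markers: tuple[str, ...]   # strings that mark data as on-prem only (hypothetical)


@dataclass(frozen=True)
class ToolCall:
    url: str
    payload: str


def decide(policy: Policy, call: ToolCall) -> str:
    """Return 'deny' to block the call, or 'local'/'cloud' for where its payload may be processed."""
    host = urlparse(call.url).hostname or ""
    if host not in policy.allowed_hosts:
        return "deny"    # blocked by the runtime, whatever the agent code intended
    if any(marker in call.payload for marker in policy.sensitive_markers):
        return "local"   # privacy routing: sensitive data stays on-prem
    return "cloud"


# Illustrative policy; every host and marker here is an assumption.
policy = Policy(
    allowed_hosts=frozenset({"api.internal.example", "search.example"}),
    sensitive_markers=("SSN:", "CONFIDENTIAL"),
)

print(decide(policy, ToolCall("https://search.example/q", "gpu market share")))
print(decide(policy, ToolCall("https://api.internal.example/hr", "SSN: 000-00-0000")))
print(decide(policy, ToolCall("https://paste.example/upload", "quarterly numbers")))
```

The design point is that the check lives outside the agent's own code, so a misbehaving or prompt-injected agent cannot skip it. A real runtime would presumably inspect far more than a host name and a substring match.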

For developers, this means you can build agents that enterprises will actually approve for production. The OpenShell + NemoClaw stack handles the security review work that was previously 70% of your enterprise sales cycle.

2. AI-Q Blueprint Sets a Quality Bar

NVIDIA's AI-Q Blueprint is an open reference architecture for building research agents. It's currently top-ranking on DeepResearch Bench leaderboards, and it does something clever: it uses frontier models (Claude, GPT) for orchestration while offloading research queries to Nemotron — cutting query costs by 50%+.

This hybrid architecture pattern — expensive model for reasoning, cheap model for grunt work — is going to become standard. NVIDIA just codified it as a blueprint. If you're not already thinking about multi-model architectures for your agents, you're leaving money on the table.
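Here is a rough sketch of that routing pattern, not the AI-Q Blueprint itself. The model names, per-call prices, and step labels are hypothetical placeholders, so the savings it prints depend entirely on the step mix and prices you plug in.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    cost_per_call: float  # hypothetical dollars per call, for illustration only


# Placeholder tiers; names and prices are assumptions, not real pricing.
FRONTIER = Model("frontier-orchestrator", cost_per_call=0.10)
WORKER = Model("small-worker", cost_per_call=0.01)


def route(step_kind: str) -> Model:
    """Send planning and synthesis to the expensive model, everything else to the cheap one."""
    return FRONTIER if step_kind in {"plan", "synthesize"} else WORKER


def query_cost(steps: list[str], hybrid: bool = True) -> float:
    """Total cost of one research query under hybrid routing or frontier-only."""
    return sum((route(s) if hybrid else FRONTIER).cost_per_call for s in steps)


# One hypothetical research query: plan, eight search/summarize steps, synthesize.
steps = ["plan"] + ["search"] * 8 + ["synthesize"]
baseline = query_cost(steps, hybrid=False)
hybrid = query_cost(steps, hybrid=True)
savings = 1 - hybrid / baseline
print(f"frontier-only ${baseline:.2f}, hybrid ${hybrid:.2f}, savings {savings:.0%}")
```

The more of a query's steps are grunt work, the bigger the gap, which is why research agents with many retrieval calls are a natural fit for this split.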

3. Nemotron 3 Is Actually Competitive

The Nemotron 3 family (Ultra, Omni, VoiceChat) gets 5x throughput with NVFP4 on Blackwell hardware. The adoption list is telling: Cursor, CodeRabbit, CrowdStrike, ServiceNow, Perplexity. These aren't charity integrations — these are companies choosing Nemotron because the price/performance works.

The Nemotron Coalition (six model families across agentic, physical, healthcare, and scientific AI) is NVIDIA's play to make their models the default choice on their hardware. Not because they're the best models — they're not, and NVIDIA knows it — but because they're optimized for the stack. Same reason macOS apps run better on Apple Silicon.

Market Implications: Who Gets Squeezed

Here's where we get uncomfortable. NVIDIA's vertical integration strategy has winners and losers, and the losers list is longer than most analysts want to admit.

AMD: Running Out of Runway

AMD's MI400 series was announced at CES 2026 but isn't in production yet. Meanwhile, NVIDIA just put seven chips into full production and announced five rack-scale systems, all available H2 2026. The competitive gap isn't just performance; it's ecosystem.

NVIDIA's NVLink has no competitive equivalent for scale-up interconnect. The DSX reference design with 200+ partners creates switching costs that AMD can't match with hardware alone. AMD needs a software story, and they don't have one. ROCm is getting better, but "getting better" doesn't win against "already dominant."

Intel: Not Even in the Conversation

Gaudi 3 is seeing limited adoption. Intel is restructuring. They're not a serious competitive threat at data center AI scale, and GTC 2026 made that painfully clear. When NVIDIA lists ecosystem partners, Intel doesn't come up. That silence speaks volumes.

Custom Silicon (Broadcom, Hyperscaler ASICs): The Real Threat — and NVIDIA's Counter

The actual competitive threat to NVIDIA isn't AMD or Intel — it's Google TPUs, Amazon Trainium, and Microsoft's Maia chips. Hyperscalers building custom silicon for their own workloads.

NVIDIA's counter at GTC 2026 was brilliant: make the full stack so integrated that cherry-picking one layer is more expensive than buying the whole thing. You want to use your custom ASIC for training? Great — but you'll need NVIDIA's NVLink for interconnect, BlueField for networking, Spectrum for switching, OpenShell for agent security, and NemoClaw for the enterprise agent platform. The stack is the moat.

Source: @PatrickMoorhead


This is exactly what Patrick Moorhead flagged pre-GTC: Vera Rubin shipping to hyperscalers isn't just a hardware deal — it's a platform commitment.

Cloud Providers: Frenemies Getting More Frenemy

AWS, Google Cloud, Azure, Oracle, CoreWeave — they're all listed as Vera Rubin cloud partners. They're also all building competitive silicon. The relationship is increasingly tense: cloud providers need NVIDIA's hardware because customers demand it, but every NVIDIA software layer (NemoClaw, OpenShell, Agent Toolkit) is a layer the cloud provider doesn't control.

Watch for cloud providers to push harder on their own agent frameworks as a counter to NemoClaw. But NVIDIA has a head start — and 16 enterprise SaaS partners already integrated.

The Spectacle Moments (Because Jensen Knows Entertainment)

Two moments from the keynote deserve mention, not because they're the most important announcements, but because they reveal Jensen's genius for narrative.

Olaf Walked

A walking, talking Olaf from Frozen waddled onto stage, powered by NVIDIA's Jetson hardware, Newton physics engine, and Omniverse simulation. It was silly. It was charming. It was the most-shared clip from GTC 2026 (183K views in four days). And it demonstrated physical AI capabilities more effectively than any spec sheet could.

Jensen told Olaf: "I gave you your computer — Jetson... you learned how to walk inside Omniverse." Behind the Disney charm, that sentence describes an entire embodied AI pipeline: train in simulation, deploy on edge hardware, run in the real world.

Space: The Vision Play

AI data centers in orbit sounds like sci-fi marketing. And honestly, at less than 1% of near-term revenue, it kind of is. But there's real logic: satellite constellations generate massive data, downlinking it for processing is a bandwidth bottleneck, and on-orbit inference solves it. Planet Labs processing Earth imagery in real time from space is genuinely useful. The 25x compute improvement over H100 for environments constrained by size, weight, and power (SWaP) isn't trivial engineering.

But let's be honest: Jensen said "Space computing, the final frontier, has arrived" with a straight face. The man knows how to sell a vision.

The $1 Trillion Question (Let's Actually Do the Math)

Since everyone's going to ask: is the $1 trillion cumulative revenue forecast realistic?

NVIDIA's FY2025 revenue was approximately $130 billion. To hit $1T cumulative through FY2027, the company needs to average roughly $400-450B a year across FY2026 and FY2027 (the remaining $870B split over two years is about $435B each). That implies annual revenue of roughly 3x the FY2025 level: aggressive, but not insane given current trajectory and the Vera Rubin ramp.
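If you want to check that arithmetic or swap in your own starting figure, a few lines are enough. The inputs are the approximate numbers from the paragraph above; treating FY2026 and FY2027 as the only remaining years is our assumption.

```python
# Figures from the paragraph above, in billions of dollars.
TARGET_CUMULATIVE_B = 1000.0   # the $1T cumulative target
FY2025_REVENUE_B = 130.0       # approximate FY2025 revenue
REMAINING_YEARS = 2            # assumption: FY2026 and FY2027


def required_average(target_b: float, booked_b: float, years: int) -> float:
    """Average annual revenue needed over the remaining years to reach the target."""
    return (target_b - booked_b) / years


avg_b = required_average(TARGET_CUMULATIVE_B, FY2025_REVENUE_B, REMAINING_YEARS)
multiple = avg_b / FY2025_REVENUE_B

print(f"Required average: ${avg_b:.0f}B per year")
print(f"Multiple of FY2025 revenue: {multiple:.1f}x")
```

An even split is the simplest reading. In practice revenue would ramp, so the final year would have to land well above the average.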

The bull case: Jensen cited $150B in AI VC funding in the last year and computing demand that's "increased by 1 million times." Every major cloud provider is in a capex arms race. Sovereign AI initiatives worldwide are building national compute infrastructure on NVIDIA silicon. The demand drivers are real and accelerating.

The bear case: $1T is cumulative, not annual. The market is pricing in perfection. History tells us that trillion-dollar forecasts from CEOs should be discounted 20-30%. And the custom silicon threat from hyperscalers isn't going away — Google's TPU v6e, Amazon's Trainium, and Microsoft's Maia all chip away at NVIDIA's cloud market share over time.

Our take: The number is achievable but not guaranteed. The more important question isn't whether NVIDIA hits $1T — it's whether the platform strategy (NemoClaw, OpenShell, Agent Toolkit) creates enough lock-in to sustain premium margins even as hardware competition intensifies. That's the real bet.

📺 Watch: The Information: $1T Forecast Analysis

For a sharper debate on the numbers, The Information's analyst panel — featuring Futurum Group and Hydra Host — breaks down the bull and bear cases here.

📖 Read: FundaAI: NVIDIA is Rewriting the AI Factory Playbook

For the structural thesis on why NVIDIA is rewriting the AI factory model entirely, FundaAI's deep analysis is worth reading: it covers the Vera Rubin platform architecture, Groq LPU economics, and photonics strategy in detail.

📖 Read: ARMR Investing: The Inference Era Changes Everything

ARMR Investing's comprehensive breakdown frames GTC 2026 as the start of the "Inference Era" — which tracks with our analysis that the training-to-inference shift is the real story.

Our Prediction: Where This Leads in 12-18 Months

We'll take a stance. Here's what we think happens next:

1. NemoClaw Becomes the Default Enterprise Agent Stack (6-12 months)

The enterprise partner list (Adobe, Salesforce, SAP, ServiceNow, Palantir) isn't decorative — these are committed integrations. By Q4 2026, NemoClaw will be the default agent runtime for enterprises that already run on NVIDIA hardware. Which is... most of them. OpenShell's security model solves the CISO objection that's blocked agent adoption for 18 months. Expect a wave of "enterprise AI agent" product launches in H2 2026, most built on NemoClaw under the hood.

2. Inference Economics Flip the Cloud Business Model (12-18 months)

The Groq 3 LPU's 35x throughput-per-megawatt improvement makes trillion-parameter model inference economically viable at scale. This changes the cloud business model: inference moves from a cost center to a profit center. Cloud providers that adopt Vera Rubin + Groq 3 racks can offer inference pricing that undercuts competitors still running on general-purpose GPUs. Watch for aggressive inference pricing wars in H1 2027.
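To see why throughput per megawatt maps so directly onto pricing, consider just the electricity share of serving cost. This is a simplified sketch: the baseline throughput and power price are hypothetical, and it ignores hardware, cooling, and networking costs.

```python
def energy_cost_per_million_tokens(tokens_per_sec_per_mw: float, usd_per_mwh: float) -> float:
    """Electricity cost of generating one million tokens at a given throughput per megawatt."""
    tokens_per_mwh = tokens_per_sec_per_mw * 3600  # one megawatt running for one hour
    return usd_per_mwh / tokens_per_mwh * 1_000_000


# Hypothetical inputs for illustration only.
BASELINE_TOKENS_PER_SEC_PER_MW = 1_000_000.0
USD_PER_MWH = 80.0
SPEEDUP = 35  # the throughput-per-megawatt multiple quoted above

before = energy_cost_per_million_tokens(BASELINE_TOKENS_PER_SEC_PER_MW, USD_PER_MWH)
after = energy_cost_per_million_tokens(BASELINE_TOKENS_PER_SEC_PER_MW * SPEEDUP, USD_PER_MWH)
print(f"energy cost per 1M tokens: ${before:.4f} -> ${after:.6f} ({before / after:.0f}x lower)")
```

Whatever baseline you assume, the energy cost per token falls by the same multiple as throughput per megawatt rises. For a power-constrained data center, that is also 35x more sellable tokens from the same grid connection.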

3. NVIDIA's Software Revenue Becomes Material (18-24 months)

This is the big one. Right now, NVIDIA's revenue is overwhelmingly hardware. But NemoClaw, OpenShell, Agent Toolkit, Nemotron models, DGX Cloud — these are all software and service revenue streams. As the platform matures and enterprise adoption grows, NVIDIA's software revenue will start showing up as a meaningful line item. When Wall Street starts valuing NVIDIA with a software multiple on top of hardware, the stock re-rates.

4. The Custom Silicon Threat Doesn't Kill NVIDIA — It Segments the Market

Google, Amazon, and Microsoft will continue building custom chips for their own internal workloads. But the other 90% of enterprise compute (companies that don't have 10,000-person silicon teams) will run on NVIDIA's stack. The market segments: hyperscaler internal workloads migrate partially to custom silicon, everything else consolidates on NVIDIA. Net effect: NVIDIA's market share dips slightly, but its revenue keeps growing in absolute terms because the overall market expands 3-5x.
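The share-versus-revenue logic is easy to sanity-check. The share figures below are hypothetical, chosen only to show the shape of the argument; the 3x and 5x market multiples come from the prediction above.

```python
def revenue(market_size: float, share: float) -> float:
    """Revenue is market size times share of that market."""
    return market_size * share


def breakeven_share(current_share: float, market_multiple: float) -> float:
    """Share at which revenue stays flat after the market grows by market_multiple."""
    return current_share / market_multiple


# Hypothetical figures, indexed so that today's market = 100.
today = revenue(100.0, 0.80)
low_case = revenue(300.0, 0.70)    # market grows 3x, share slips 10 points
high_case = revenue(500.0, 0.70)   # market grows 5x, same lower share

print(f"today {today:.0f}, 3x market {low_case:.0f}, 5x market {high_case:.0f}")
print(f"break-even share at 3x: {breakeven_share(0.80, 3.0):.1%}")
```

With a market that triples, share would have to collapse to roughly a third of its starting level before revenue actually shrank, which is why a modest dip in share does not threaten the growth story.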

The Bigger Picture: Why This Infrastructure Matters

Here's a thought worth sitting with: NVIDIA's inference-first architecture isn't just about serving chatbots faster. It's about enabling an entirely new category of AI systems — ones that improve themselves.

We recently covered how MiniMax's M27 demonstrated self-evolving AI agents — models that autonomously tune their own parameters, optimize workflows, and detect failure loops without human intervention. These systems are inference-hungry by design: every self-improvement cycle is a tight loop of generate → evaluate → adapt → repeat. The infrastructure NVIDIA announced at GTC — Vera Rubin's inference throughput, Groq 3's deterministic latency, NemoClaw's orchestration layer — is exactly the kind of stack these self-compounding agents need to operate at scale.

NVIDIA may not have used the phrase "self-evolving AI" on stage. But their $1 trillion demand forecast only makes sense in a world where AI agents don't just serve requests — they compound their own capabilities. And that world is arriving faster than most people realize.

The Bottom Line

GTC 2026 wasn't a product launch. It was a platform declaration.

NVIDIA is building the iOS of AI infrastructure — vertically integrated, opinionated, designed to make the full stack work together so seamlessly that extracting any single layer becomes more trouble than it's worth. The $1 trillion number is the headline. The NemoClaw + OpenShell + Agent Toolkit stack is the strategy. And the inference shift — from training-dominant to inference-dominant workloads — is the economic engine that makes it all work.

Jensen Huang didn't just announce cool hardware last Sunday. He announced that NVIDIA intends to be the platform company for the AI era, the way Microsoft was for the PC era and Apple is for the mobile era. Whether he pulls it off depends on execution. But after watching that keynote, we wouldn't bet against him.

Watch the full keynote (2hr 18min, 42 chapters) or CNET's 12-minute summary if you're short on time.

Sources and Further Reading:

- NVIDIA Vera Rubin Platform — Official press release

- NVIDIA Vera CPU — Vera CPU details

- NemoClaw Announcement — Enterprise agent platform

- NVIDIA AI Agents Platform — OpenShell, AI-Q, Agent Toolkit

- DLSS 5 Press Release — Neural rendering

- DRIVE Hyperion L4 + Uber — Autonomous vehicles

- Space Computing — Orbital AI

- Open Models Expansion — Nemotron 3, Cosmos 3, GR00T

- GTC 2026 Live Blog — NVIDIA's running coverage

- FundaAI Analysis — AI Factory Playbook thesis

- ARMR Investing Breakdown — Inference Era analysis

- HN Discussion: Vera Rubin — Developer community reaction

About ComputeLeap Team

The ComputeLeap editorial team covers AI tools, agents, and products — helping readers discover and use artificial intelligence to work smarter.
